mirror of

https://github.com/codeflash-ai/codeflash-internal.git

synced 2026-05-04 18:25:18 +00:00

delete the previous cli code

This commit is contained in:

parent

13e608cd82

commit

16d1a9146b

222 changed files with 0 additions and 30013 deletions

|

|

@ -1,57 +0,0 @@

|

|||

# Codeflash

|

||||

|

||||

Codeflash is an AI optimization tool that automatically improves the performance of your Python code while maintaining its correctness.

|

||||

|

||||

|

||||

|

||||

## Features

|

||||

|

||||

- Automatically optimizes your code using AI

|

||||

- Maintains code correctness through extensive testing

|

||||

- Opens a pull request for each optimization

|

||||

- Continuously optimizes your codebase through CI

|

||||

- Dynamically optimizes your real workflows through tracing

|

||||

|

||||

## Installation

|

||||

|

||||

To install Codeflash, run:

|

||||

|

||||

```

|

||||

pip install codeflash

|

||||

```

|

||||

|

||||

## Quick Start

|

||||

|

||||

1. Configure Codeflash for your project:

|

||||

```

|

||||

codeflash init

|

||||

```

|

||||

|

||||

2. Optimize a function:

|

||||

```

|

||||

codeflash --file path/to/your/file.py --function function_name

|

||||

```

|

||||

|

||||

3. Optimize your entire codebase:

|

||||

```

|

||||

codeflash --all

|

||||

```

|

||||

|

||||

## Getting the Best Results

|

||||

|

||||

To get the most out of Codeflash:

|

||||

|

||||

1. Install the Github App and actions workflow

|

||||

2. Find optimizations on your whole codebase with codeflash --all

|

||||

3. Find and optimize bottlenecks with the Codeflash Tracer

|

||||

4. Review the PRs Codeflash opens

|

||||

|

||||

|

||||

## Learn More

|

||||

|

||||

- [Codeflash Website](https://www.codeflash.ai)

|

||||

- [Documentation](https://docs.codeflash.ai)

|

||||

|

||||

## License

|

||||

|

||||

Codeflash is licensed under the BSL-1.1 License. See the LICENSE file for details.

|

||||

|

|

@ -1,55 +0,0 @@

|

|||

from __future__ import annotations

|

||||

|

||||

from sqlalchemy import ForeignKey, Integer, String, create_engine

|

||||

from sqlalchemy.engine.base import Engine

|

||||

from sqlalchemy.orm import (

|

||||

DeclarativeBase,

|

||||

Mapped,

|

||||

Relationship,

|

||||

Session,

|

||||

mapped_column,

|

||||

relationship,

|

||||

sessionmaker,

|

||||

)

|

||||

|

||||

|

||||

# Custom base class

|

||||

class Base(DeclarativeBase):

|

||||

pass

|

||||

|

||||

|

||||

engine: Engine = create_engine('sqlite:///example.db')

|

||||

|

||||

session_factory = sessionmaker(bind=engine)

|

||||

session: Session = session_factory()

|

||||

|

||||

|

||||

class User(Base):

|

||||

__tablename__: str = 'users'

|

||||

id: Mapped[int] = mapped_column(Integer, primary_key=True)

|

||||

name: Mapped[str] = mapped_column(String)

|

||||

posts: Relationship[list[Post]] = relationship("Post", order_by="Post.id", back_populates="user")

|

||||

|

||||

|

||||

class Post(Base):

|

||||

__tablename__: str = 'posts'

|

||||

id: Mapped[int] = mapped_column(Integer, primary_key=True)

|

||||

title: Mapped[str] = mapped_column(String)

|

||||

user_id: Mapped[int] = mapped_column(Integer, ForeignKey('users.id'))

|

||||

user: Relationship[User] = relationship("User", back_populates="posts")

|

||||

|

||||

|

||||

Base.metadata.create_all(engine)

|

||||

|

||||

|

||||

def get_user_posts() -> dict[User, list[Post]]:

|

||||

users: list[User] = session.query(User).all() # Query all users

|

||||

user_posts: dict[User, list[Post]] = {}

|

||||

for u in users:

|

||||

user_posts[u] = session.query(Post).filter(Post.user_id == u.id).all()

|

||||

return user_posts

|

||||

|

||||

|

||||

# Example usage

|

||||

for user, posts in get_user_posts().items():

|

||||

print(f"User: {user.name}, Posts: {[post.title for post in posts]}")

|

||||

|

|

@ -1,109 +0,0 @@

|

|||

from time import time

|

||||

from typing import List

|

||||

|

||||

from sqlalchemy import Boolean, Column, ForeignKey, Integer, Text, func

|

||||

from sqlalchemy.engine import Engine, create_engine

|

||||

from sqlalchemy.orm import DeclarativeBase, Session, relationship, sessionmaker

|

||||

from sqlalchemy.orm.relationships import Relationship

|

||||

|

||||

POSTGRES_CONNECTION_STRING: str = ("postgresql://cf_developer:XJcbU37MBYeh4dDK6PTV5n@sqlalchemy-experiments.postgres"

|

||||

".database.azure.com:5432/postgres")

|

||||

|

||||

|

||||

class Base(DeclarativeBase):

|

||||

pass

|

||||

|

||||

|

||||

class Author(Base):

|

||||

__tablename__: str = "authors"

|

||||

|

||||

id: Column[int] = Column(Integer, primary_key=True)

|

||||

name: Column[str] = Column(Text, nullable=False)

|

||||

|

||||

|

||||

class Book(Base):

|

||||

__tablename__: str = "books"

|

||||

|

||||

id: Column[int] = Column(Integer, primary_key=True)

|

||||

title: Column[str] = Column(Text, nullable=False)

|

||||

author_id: Column[int] = Column(Integer, ForeignKey("authors.id"), nullable=False)

|

||||

is_bestseller: Column[bool] = Column(Boolean, default=False)

|

||||

|

||||

author: Relationship[Author] = relationship("Author", backref="books")

|

||||

|

||||

|

||||

def init_table() -> Session:

|

||||

catalog_engine: Engine = create_engine(POSTGRES_CONNECTION_STRING, echo=True)

|

||||

session: Session = sessionmaker(bind=catalog_engine)()

|

||||

i: int

|

||||

for i in range(50):

|

||||

author: Author = Author(id=i, name=f"author{i}")

|

||||

session.add(author)

|

||||

for i in range(100000):

|

||||

book: Book = Book(id=i, title=f"book{i}", author_id=i % 50, is_bestseller=i % 2 == 0)

|

||||

session.add(book)

|

||||

session.commit()

|

||||

|

||||

return session

|

||||

|

||||

|

||||

def get_authors(books: list[Book]) -> list[Author]:

|

||||

_authors: list[Author] = []

|

||||

book: Book

|

||||

for book in books:

|

||||

_authors.append(book.author)

|

||||

return sorted(

|

||||

list(set(_authors)),

|

||||

key=lambda x: x.id,

|

||||

)

|

||||

|

||||

def get_authors2(num_authors) -> list[Author]:

|

||||

engine: Engine = create_engine(POSTGRES_CONNECTION_STRING, echo=True)

|

||||

session_factory: sessionmaker[Session] = sessionmaker(bind=engine)

|

||||

session: Session = session_factory()

|

||||

books: list[Book] = session.query(Book).all()

|

||||

_authors: list[Author] = []

|

||||

book: Book

|

||||

for book in books:

|

||||

_authors.append(book.author)

|

||||

return sorted(

|

||||

list(set(_authors)),

|

||||

key=lambda x: x.id,

|

||||

)[:num_authors]

|

||||

|

||||

|

||||

def get_top_author(authors: List[Author]) -> Author:

|

||||

engine: Engine = create_engine(POSTGRES_CONNECTION_STRING, echo=True)

|

||||

session_factory: sessionmaker[Session] = sessionmaker(bind=engine)

|

||||

session: Session = session_factory()

|

||||

|

||||

# Step 1: Initialize variables to keep track of the author with the maximum bestsellers

|

||||

max_bestsellers = 0

|

||||

top_author = None

|

||||

|

||||

# Step 2: Iterate over each author to count their bestsellers

|

||||

for author in authors:

|

||||

bestseller_count = (

|

||||

session.query(func.count(Book.id))

|

||||

.filter(Book.author_id == author.id, Book.is_bestseller == True)

|

||||

.scalar()

|

||||

)

|

||||

|

||||

# Step 3: Update the author with the maximum bestsellers

|

||||

if bestseller_count > max_bestsellers:

|

||||

max_bestsellers = bestseller_count

|

||||

top_author = author

|

||||

|

||||

return top_author

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

engine: Engine = create_engine(POSTGRES_CONNECTION_STRING, echo=True)

|

||||

session_factory: sessionmaker[Session] = sessionmaker(bind=engine)

|

||||

_session: Session = session_factory()

|

||||

_t: float = time()

|

||||

authors: list[Author] = get_authors(_session)

|

||||

print("TIME TAKEN", time() - _t)

|

||||

authors_name = list(map(lambda x: x.name, authors))

|

||||

print("len(authors_name)", len(authors_name))

|

||||

print(set(authors_name))

|

||||

|

|

@ -1,32 +0,0 @@

|

|||

from __future__ import annotations

|

||||

|

||||

from typing import Any, cast

|

||||

|

||||

from _typeshed import SupportsDunderGT, SupportsDunderLT

|

||||

from sqlalchemy.orm import Session

|

||||

|

||||

from code_to_optimize.book_catalog import (

|

||||

POSTGRES_CONNECTION_STRING,

|

||||

Author,

|

||||

Base,

|

||||

Book,

|

||||

_session,

|

||||

_t,

|

||||

authors,

|

||||

authors_name,

|

||||

engine,

|

||||

init_table,

|

||||

session_factory,

|

||||

)

|

||||

|

||||

|

||||

def get_authors(session: Session) -> list[Author]:

|

||||

books: list[Book] = session.query(Book).all()

|

||||

_authors: list[Author] = []

|

||||

book: Book

|

||||

for book in books:

|

||||

_authors.append(book.author)

|

||||

return sorted(

|

||||

list(set(_authors)),

|

||||

key=lambda x: cast(SupportsDunderLT[Any] | SupportsDunderGT[Any], x.id),

|

||||

)

|

||||

|

|

@ -1,23 +0,0 @@

|

|||

from __future__ import annotations

|

||||

|

||||

from code_to_optimize.book_catalog import (

|

||||

POSTGRES_CONNECTION_STRING,

|

||||

Author,

|

||||

Base,

|

||||

Book,

|

||||

_session,

|

||||

_t,

|

||||

authors,

|

||||

authors_name,

|

||||

engine,

|

||||

init_table,

|

||||

session_factory,

|

||||

)

|

||||

|

||||

|

||||

def get_authors(session):

|

||||

books = session.query(Book).all()

|

||||

_authors = []

|

||||

for book in books:

|

||||

_authors.append(book.author)

|

||||

return sorted(list(set(_authors)), key=lambda x: x.id)

|

||||

|

|

@ -1,8 +0,0 @@

|

|||

def sorter(arr):

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

|

@ -1,6 +0,0 @@

|

|||

def sorter(arr):

|

||||

arr.sort()

|

||||

return arr

|

||||

|

||||

|

||||

CACHED_TESTS = "import unittest\ndef sorter(arr):\n for i in range(len(arr)):\n for j in range(len(arr) - 1):\n if arr[j] > arr[j + 1]:\n temp = arr[j]\n arr[j] = arr[j + 1]\n arr[j + 1] = temp\n return arr\nclass SorterTestCase(unittest.TestCase):\n def test_empty_list(self):\n self.assertEqual(sorter([]), [])\n def test_single_element_list(self):\n self.assertEqual(sorter([5]), [5])\n def test_ascending_order_list(self):\n self.assertEqual(sorter([1, 2, 3, 4, 5]), [1, 2, 3, 4, 5])\n def test_descending_order_list(self):\n self.assertEqual(sorter([5, 4, 3, 2, 1]), [1, 2, 3, 4, 5])\n def test_random_order_list(self):\n self.assertEqual(sorter([3, 1, 4, 2, 5]), [1, 2, 3, 4, 5])\n def test_duplicate_elements_list(self):\n self.assertEqual(sorter([3, 1, 4, 2, 2, 5, 1]), [1, 1, 2, 2, 3, 4, 5])\n def test_negative_numbers_list(self):\n self.assertEqual(sorter([-5, -2, -8, -1, -3]), [-8, -5, -3, -2, -1])\n def test_mixed_data_types_list(self):\n self.assertEqual(sorter(['apple', 2, 'banana', 1, 'cherry']), [1, 2, 'apple', 'banana', 'cherry'])\n def test_large_input_list(self):\n self.assertEqual(sorter(list(range(1000, 0, -1))), list(range(1, 1001)))\n def test_list_with_none_values(self):\n self.assertEqual(sorter([None, 2, None, 1, None]), [None, None, None, 1, 2])\n def test_list_with_nan_values(self):\n self.assertEqual(sorter([float('nan'), 2, float('nan'), 1, float('nan')]), [1, 2, float('nan'), float('nan'), float('nan')])\n def test_list_with_complex_numbers(self):\n self.assertEqual(sorter([3 + 2j, 1 + 1j, 4 + 3j, 2 + 1j, 5 + 4j]), [1 + 1j, 2 + 1j, 3 + 2j, 4 + 3j, 5 + 4j])\n def test_list_with_custom_class_objects(self):\n class Person:\n def __init__(self, name, age):\n self.name = name\n self.age = age\n def __repr__(self):\n return f\"Person('{self.name}', {self.age})\"\n input_list = [Person('Alice', 25), Person('Bob', 30), Person('Charlie', 20)]\n expected_output = [Person('Charlie', 20), Person('Alice', 25), Person('Bob', 30)]\n self.assertEqual(sorter(input_list), expected_output)\n def test_list_with_uncomparable_elements(self):\n with self.assertRaises(TypeError):\n sorter([5, 'apple', 3, [1, 2, 3], 2])\n def test_list_with_custom_comparison_function(self):\n input_list = [5, 4, 3, 2, 1]\n expected_output = [5, 4, 3, 2, 1]\n self.assertEqual(sorter(input_list, reverse=True), expected_output)\nif __name__ == '__main__':\n unittest.main()"

|

||||

|

|

@ -1,8 +0,0 @@

|

|||

def sorter(arr):

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

|

@ -1,2 +0,0 @@

|

|||

def dep1_comparer(arr, j: int) -> bool:

|

||||

return arr[j] > arr[j + 1]

|

||||

|

|

@ -1,4 +0,0 @@

|

|||

def dep2_swap(arr, j):

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

|

|

@ -1,150 +0,0 @@

|

|||

from code_to_optimize.bubble_sort_dep1_helper import dep1_comparer

|

||||

from code_to_optimize.bubble_sort_dep2_swap import dep2_swap

|

||||

|

||||

|

||||

def sorter_deps(arr):

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if dep1_comparer(arr, j):

|

||||

dep2_swap(arr, j)

|

||||

return arr

|

||||

|

||||

|

||||

CACHED_TESTS = """import dill as pickle

|

||||

import os

|

||||

def _log__test__values(values, duration, test_name):

|

||||

iteration = os.environ["CODEFLASH_TEST_ITERATION"]

|

||||

with open(os.path.join(

|

||||

'/var/folders/ms/1tz2l1q55w5b7pp4wpdkbjq80000gn/T/codeflash_jk4pzz3w/',

|

||||

f'test_return_values_{iteration}.bin'), 'ab') as f:

|

||||

return_bytes = pickle.dumps(values)

|

||||

_test_name = f"{test_name}".encode("ascii")

|

||||

f.write(len(_test_name).to_bytes(4, byteorder='big'))

|

||||

f.write(_test_name)

|

||||

f.write(duration.to_bytes(8, byteorder='big'))

|

||||

f.write(len(return_bytes).to_bytes(4, byteorder='big'))

|

||||

f.write(return_bytes)

|

||||

import time

|

||||

import gc

|

||||

from code_to_optimize.bubble_sort_deps import sorter_deps

|

||||

import timeout_decorator

|

||||

import unittest

|

||||

|

||||

def dep1_comparer(arr, j: int) -> bool:

|

||||

return arr[j] > arr[j + 1]

|

||||

|

||||

def dep2_swap(arr, j):

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

|

||||

class TestSorterDeps(unittest.TestCase):

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_integers(self):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([5, 3, 2, 4, 1])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(

|

||||

return_value, duration,

|

||||

'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_integers:sorter_deps:0')

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([10, -3, 0, 2, 7])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(

|

||||

return_value, duration,

|

||||

('code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:'

|

||||

'TestSorterDeps.test_integers:sorter_deps:1'))

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_floats(self):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([3.2, 1.5, 2.7, 4.1, 1.0])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration,

|

||||

'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_floats:sorter_deps:0')

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([-1.1, 0.0, 3.14, 2.71, -0.5])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration,

|

||||

'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_floats:sorter_deps:1')

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_identical_elements(self):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([1, 1, 1, 1, 1])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration,

|

||||

('code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:'

|

||||

'TestSorterDeps.test_identical_elements:sorter_deps:0'))

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([3.14, 3.14, 3.14])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration,

|

||||

('code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:'

|

||||

'TestSorterDeps.test_identical_elements:sorter_deps:1'))

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_single_element(self):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([5])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration, 'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_single_element:sorter_deps:0')

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([-3.2])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration, 'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_single_element:sorter_deps:1')

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_empty_array(self):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration, 'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_empty_array:sorter_deps:0')

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_strings(self):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps(['apple', 'banana', 'cherry', 'date'])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration, 'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_strings:sorter_deps:0')

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps(['dog', 'cat', 'elephant', 'ant'])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration, 'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_strings:sorter_deps:1')

|

||||

|

||||

@timeout_decorator.timeout(15, use_signals=True)

|

||||

def test_mixed_types(self):

|

||||

with self.assertRaises(TypeError):

|

||||

gc.disable()

|

||||

counter = time.perf_counter_ns()

|

||||

return_value = sorter_deps([1, 'two', 3.0, 'four'])

|

||||

duration = time.perf_counter_ns() - counter

|

||||

gc.enable()

|

||||

_log__test__values(return_value, duration, 'code_to_optimize.tests.unittest.test_sorter_deps__unit_test_0:TestSorterDeps.test_mixed_types:sorter_deps:0_0')

|

||||

if __name__ == '__main__':

|

||||

unittest.main()

|

||||

|

||||

"""

|

||||

|

|

@ -1,6 +0,0 @@

|

|||

from code_to_optimize.bubble_sort import sorter

|

||||

|

||||

|

||||

def sort_from_another_file(arr):

|

||||

sorted_arr = sorter(arr)

|

||||

return sorted_arr

|

||||

|

|

@ -1,18 +0,0 @@

|

|||

def hi():

|

||||

pass

|

||||

|

||||

|

||||

class BubbleSortClass:

|

||||

def __init__(self):

|

||||

pass

|

||||

|

||||

def sorter(self, arr):

|

||||

n = len(arr)

|

||||

for i in range(n):

|

||||

for j in range(0, n - i - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

arr[j], arr[j + 1] = arr[j + 1], arr[j]

|

||||

return arr

|

||||

|

||||

def helper(self, arr, j):

|

||||

return arr[j] > arr[j + 1]

|

||||

|

|

@ -1,26 +0,0 @@

|

|||

def hi():

|

||||

pass

|

||||

|

||||

|

||||

class WrapperClass:

|

||||

def __init__(self):

|

||||

pass

|

||||

|

||||

class BubbleSortClass:

|

||||

def __init__(self):

|

||||

pass

|

||||

|

||||

def sorter(self, arr):

|

||||

def inner_helper(arr, j):

|

||||

return arr[j] > arr[j + 1]

|

||||

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

||||

def helper(self, arr, j):

|

||||

return arr[j] > arr[j + 1]

|

||||

|

|

@ -1,12 +0,0 @@

|

|||

class BubbleSorter:

|

||||

def __init__(self, x=0):

|

||||

self.x = x

|

||||

|

||||

def sorter(self, arr):

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

|

@ -1,8 +0,0 @@

|

|||

def sorter(arr: list[int]) -> list[int]:

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

|

@ -1,7 +0,0 @@

|

|||

[tool.codeflash]

|

||||

disable-imports-sorting = true

|

||||

disable-telemetry = true

|

||||

formatter-cmds = ["ruff check --exit-zero --fix $file", "ruff format $file"]

|

||||

module-root = "src/aviary"

|

||||

test-framework = "pytest"

|

||||

tests-root = "tests"

|

||||

|

|

@ -1,14 +0,0 @@

|

|||

from __future__ import annotations

|

||||

|

||||

|

||||

def find_common_tags(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

i = 0

|

||||

for _ in range(1000000):

|

||||

i += 1

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article["tags"])

|

||||

return common_tags

|

||||

|

|

@ -1,22 +0,0 @@

|

|||

from aviary.common_tags import find_common_tags

|

||||

|

||||

|

||||

def test_common_tags_1() -> None:

|

||||

articles_1 = [

|

||||

{"title": "Article 1", "tags": ["Python", "AI", "ML"]},

|

||||

{"title": "Article 2", "tags": ["Python", "Data Science", "AI"]},

|

||||

{"title": "Article 3", "tags": ["Python", "AI", "Big Data"]},

|

||||

]

|

||||

|

||||

expected = {"Python", "AI"}

|

||||

|

||||

assert find_common_tags(articles_1) == expected

|

||||

|

||||

articles_2 = [

|

||||

{"title": "Article 1", "tags": ["Python", "AI", "ML"]},

|

||||

{"title": "Article 2", "tags": ["Python", "Data Science", "AI"]},

|

||||

{"title": "Article 3", "tags": ["Python", "AI", "Big Data"]},

|

||||

{"title": "Article 4", "tags": ["Python", "AI", "ML"]},

|

||||

]

|

||||

|

||||

assert find_common_tags(articles_2) == expected

|

||||

|

|

@ -1,47 +0,0 @@

|

|||

def sorter_one_level_depth(arr):

|

||||

return sorter(arr)

|

||||

|

||||

|

||||

def sorter(arr):

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

||||

async def decompress_braces(string):

|

||||

numbers = "123456789"

|

||||

stack = []

|

||||

|

||||

for char in string:

|

||||

if char in numbers:

|

||||

stack.append(int(char))

|

||||

elif char == "{":

|

||||

continue

|

||||

elif "a" <= char <= "z" or "A" <= char <= "Z":

|

||||

stack.append(char)

|

||||

elif char == "}":

|

||||

segment = ""

|

||||

while isinstance(stack[-1], str):

|

||||

popped_char = stack.pop()

|

||||

segment = popped_char + segment

|

||||

num = stack.pop()

|

||||

stack.append(segment * num)

|

||||

return "".join(stack)

|

||||

|

||||

|

||||

async def sorter_one_level_depth_lower(arr):

|

||||

return sorter(arr)

|

||||

|

||||

|

||||

|

||||

def add_one_level_depth(a, b):

|

||||

return add(a, b)

|

||||

|

||||

def add(a, b):

|

||||

return a + b

|

||||

|

||||

def multiply_and_add(a, b, c):

|

||||

return a * b + c

|

||||

|

|

@ -1,7 +0,0 @@

|

|||

[tool.codeflash]

|

||||

# All paths are relative to this pyproject.toml's directory.

|

||||

module-root = "."

|

||||

tests-root = "tests"

|

||||

test-framework = "pytest"

|

||||

ignore-paths = []

|

||||

formatter-cmds = ["black $file"]

|

||||

|

|

@ -1,40 +0,0 @@

|

|||

import pytest

|

||||

from bubble_sort import sorter, sorter_one_level_depth, add_one_level_depth, add, multiply_and_add

|

||||

|

||||

|

||||

def test_sort():

|

||||

input = [5, 4, 3, 2, 1, 0]

|

||||

output = sorter(input)

|

||||

assert output == [0, 1, 2, 3, 4, 5]

|

||||

|

||||

input = [5.0, 4.0, 3.0, 2.0, 1.0, 0.0]

|

||||

output = sorter(input)

|

||||

assert output == [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]

|

||||

|

||||

input = list(reversed(range(5000)))

|

||||

output = sorter(input)

|

||||

assert output == list(range(5000))

|

||||

|

||||

def test_sorter_one_level_depth():

|

||||

input = [3, 2, 1]

|

||||

output = sorter_one_level_depth(input)

|

||||

assert output == [1, 2, 3]

|

||||

|

||||

|

||||

def test_add_one_level_depth():

|

||||

assert add_one_level_depth(1, 2) == 3

|

||||

assert add_one_level_depth(-1, 1) == 0

|

||||

assert add_one_level_depth(0, 0) == 0

|

||||

|

||||

|

||||

def test_add():

|

||||

assert add(1, 2) == 3

|

||||

assert add(-1, 1) == 0

|

||||

assert add(0, 0) == 0

|

||||

|

||||

|

||||

def test_multiply_and_add():

|

||||

assert multiply_and_add(2, 3, 4) == 10

|

||||

assert multiply_and_add(0, 3, 4) == 4

|

||||

assert multiply_and_add(-1, 3, 4) == 1

|

||||

assert multiply_and_add(2, 0, 4) == 4

|

||||

|

|

@ -1,6 +0,0 @@

|

|||

from bubble_sort_with_math import sorter

|

||||

|

||||

|

||||

def sort_from_another_file(arr):

|

||||

sorted_arr = sorter(arr)

|

||||

return sorted_arr

|

||||

|

|

@ -1,8 +0,0 @@

|

|||

import math

|

||||

|

||||

|

||||

def sorter(arr):

|

||||

arr.sort()

|

||||

x = math.sqrt(2)

|

||||

print(x)

|

||||

return arr

|

||||

|

|

@ -1,2 +0,0 @@

|

|||

# Define a global variable

|

||||

API_URL = "https://api.example.com/data"

|

||||

|

|

@ -1,37 +0,0 @@

|

|||

import requests # Third-party library

|

||||

from globals import API_URL # Global variable defined in another file

|

||||

from utils import DataProcessor

|

||||

|

||||

|

||||

def fetch_and_process_data():

|

||||

# Use the global variable for the request

|

||||

response = requests.get(API_URL)

|

||||

response.raise_for_status()

|

||||

|

||||

raw_data = response.text

|

||||

|

||||

# Use code from another file (utils.py)

|

||||

processor = DataProcessor()

|

||||

processed = processor.process_data(raw_data)

|

||||

processed = processor.add_prefix(processed)

|

||||

|

||||

return processed

|

||||

|

||||

|

||||

def fetch_and_transform_data():

|

||||

# Use the global variable for the request

|

||||

response = requests.get(API_URL)

|

||||

|

||||

raw_data = response.text

|

||||

|

||||

# Use code from another file (utils.py)

|

||||

processor = DataProcessor()

|

||||

processed = processor.process_data(raw_data)

|

||||

transformed = processor.transform_data(processed)

|

||||

|

||||

return transformed

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

result = fetch_and_process_data()

|

||||

print("Processed data:", result)

|

||||

|

|

@ -1,27 +0,0 @@

|

|||

from code_to_optimize.code_directories.retriever.utils import DataProcessor

|

||||

|

||||

|

||||

class DataTransformer:

|

||||

def __init__(self):

|

||||

self.data = None

|

||||

|

||||

def transform(self, data):

|

||||

self.data = data

|

||||

return self.data

|

||||

|

||||

def transform_using_own_method(self, data):

|

||||

return self.transform(data)

|

||||

|

||||

def transform_using_same_file_function(self, data):

|

||||

return update_data(data)

|

||||

|

||||

def transform_data_all_same_file(self, data):

|

||||

new_data = update_data(data)

|

||||

return self.transform_using_own_method(new_data)

|

||||

|

||||

def circular_dependency(self, data):

|

||||

return DataProcessor().circular_dependency(data)

|

||||

|

||||

|

||||

def update_data(data):

|

||||

return data + " updated"

|

||||

|

|

@ -1,47 +0,0 @@

|

|||

import math

|

||||

|

||||

from transform_utils import DataTransformer

|

||||

|

||||

GLOBAL_VAR = 10

|

||||

|

||||

|

||||

class DataProcessor:

|

||||

"""A class for processing data."""

|

||||

|

||||

number = 1

|

||||

|

||||

def __init__(self, default_prefix: str = "PREFIX_"):

|

||||

"""Initialize the DataProcessor with a default prefix."""

|

||||

self.default_prefix = default_prefix

|

||||

self.number += math.log(self.number)

|

||||

|

||||

def __repr__(self) -> str:

|

||||

"""Return a string representation of the DataProcessor."""

|

||||

return f"DataProcessor(default_prefix={self.default_prefix!r})"

|

||||

|

||||

def process_data(self, raw_data: str) -> str:

|

||||

"""Process raw data by converting it to uppercase."""

|

||||

return raw_data.upper()

|

||||

|

||||

def add_prefix(self, data: str, prefix: str = "PREFIX_") -> str:

|

||||

"""Add a prefix to the processed data."""

|

||||

return prefix + data

|

||||

|

||||

def do_something(self):

|

||||

print("something")

|

||||

|

||||

def transform_data(self, data: str) -> str:

|

||||

"""Transform the processed data"""

|

||||

return DataTransformer().transform(data)

|

||||

|

||||

def transform_data_own_method(self, data: str) -> str:

|

||||

"""Transform the processed data using own method"""

|

||||

return DataTransformer().transform_using_own_method(data)

|

||||

|

||||

def transform_data_same_file_function(self, data: str) -> str:

|

||||

"""Transform the processed data using a function from the same file"""

|

||||

return DataTransformer().transform_using_same_file_function(data)

|

||||

|

||||

def circular_dependency(self, data: str) -> str:

|

||||

"""Test circular dependency"""

|

||||

return DataTransformer().circular_dependency(data)

|

||||

|

|

@ -1,6 +0,0 @@

|

|||

[tool.codeflash]

|

||||

disable-telemetry = true

|

||||

formatter-cmds = ["ruff check --exit-zero --fix $file", "ruff format $file"]

|

||||

module-root = "."

|

||||

test-framework = "pytest"

|

||||

tests-root = "tests"

|

||||

|

|

@ -1,14 +0,0 @@

|

|||

def funcA(number):

|

||||

k = 0

|

||||

for i in range(number * 100):

|

||||

k += i

|

||||

# Simplify the for loop by using sum with a range object

|

||||

j = sum(range(number))

|

||||

|

||||

# Use a generator expression directly in join for more efficiency

|

||||

return " ".join(str(i) for i in range(number))

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

for i in range(10, 31, 10):

|

||||

funcA(10)

|

||||

|

|

@ -1,577 +0,0 @@

|

|||

from __future__ import annotations

|

||||

|

||||

from typing import Any, Callable, Iterable, NewType, Optional, Protocol, TypeVar

|

||||

|

||||

try:

|

||||

from typing import _TypingBase # type: ignore[attr-defined]

|

||||

except ImportError:

|

||||

from typing import _Final as _TypingBase # type: ignore[attr-defined]

|

||||

typing_base = _TypingBase

|

||||

|

||||

_T = TypeVar("_T")

|

||||

|

||||

|

||||

class Comparable(Protocol):

|

||||

def __lt__(self: _T, __other: _T) -> bool: ...

|

||||

|

||||

|

||||

ComparableT = TypeVar("ComparableT", bound=Comparable)

|

||||

|

||||

|

||||

def sorter(arr: list[ComparableT]) -> list[ComparableT]:

|

||||

for i in range(len(arr)):

|

||||

for j in range(len(arr) - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

temp = arr[j]

|

||||

arr[j] = arr[j + 1]

|

||||

arr[j + 1] = temp

|

||||

return arr

|

||||

|

||||

|

||||

def sorter2(arr: list[ComparableT]) -> list[ComparableT]:

|

||||

n = len(arr)

|

||||

for i in range(n):

|

||||

swapped = False

|

||||

for j in range(n - i - 1):

|

||||

if arr[j] > arr[j + 1]:

|

||||

arr[j], arr[j + 1] = arr[j + 1], arr[j]

|

||||

swapped = True

|

||||

if not swapped:

|

||||

break

|

||||

return arr

|

||||

|

||||

|

||||

def sorter3(arr: list[ComparableT]) -> list[ComparableT]:

|

||||

arr.sort()

|

||||

return arr

|

||||

|

||||

|

||||

def is_valid_field_name(name: str) -> bool:

|

||||

return not name.startswith("_")

|

||||

|

||||

|

||||

def is_valid_field_name2(name: str) -> bool:

|

||||

return not (name and name[0] == "_")

|

||||

|

||||

|

||||

def is_self_type(tp: Any) -> bool:

|

||||

"""Check if a given class is a Self type (from `typing` or `typing_extensions`)"""

|

||||

return isinstance(tp, typing_base) and getattr(tp, "_name", None) == "Self"

|

||||

|

||||

|

||||

def is_self_type2(tp: Any) -> bool:

|

||||

"""Check if a given class is a Self type (from `typing` or `typing_extensions`)"""

|

||||

if not isinstance(tp, _TypingBase):

|

||||

return False

|

||||

return tp._name == "Self" if hasattr(tp, "_name") else False

|

||||

|

||||

|

||||

test_new_type = NewType("test_new_type", str)

|

||||

|

||||

|

||||

def is_new_type(type_: type[Any]) -> bool:

|

||||

"""Check whether type_ was created using typing.NewType.

|

||||

Can't use isinstance because it fails <3.10.

|

||||

"""

|

||||

return isinstance(type_, test_new_type.__class__) and hasattr(type_, "__supertype__") # type: ignore[arg-type]

|

||||

|

||||

|

||||

def is_new_type2(type_: type[Any]) -> bool:

|

||||

"""Check whether type_ was created using typing.NewType.

|

||||

Can't use isinstance because it fails <3.10.

|

||||

"""

|

||||

return type(type_) is type(test_new_type) and hasattr(type_, "__supertype__")

|

||||

|

||||

|

||||

def _to_str(

|

||||

size: int,

|

||||

suffixes: Iterable[str],

|

||||

base: int,

|

||||

*,

|

||||

precision: Optional[int] = 1,

|

||||

separator: Optional[str] = " ",

|

||||

) -> str:

|

||||

if size == 1:

|

||||

return "1 byte"

|

||||

elif size < base:

|

||||

return f"{size:,} bytes"

|

||||

|

||||

for i, suffix in enumerate(suffixes, 2): # noqa: B007

|

||||

unit = base**i

|

||||

if size < unit:

|

||||

break

|

||||

return "{:,.{precision}f}{separator}{}".format(

|

||||

(base * size / unit),

|

||||

suffix,

|

||||

precision=precision,

|

||||

separator=separator,

|

||||

)

|

||||

|

||||

|

||||

# Given: (size=-1, suffixes=(), base=-1, precision=0, separator=None),

|

||||

# code_to_optimize.bubble_sort_typed._to_str : raises UnboundLocalError("cannot access local variable 'unit' where it is not associated with a value")

|

||||

# code_to_optimize.bubble_sort_typed._to_str2 : raises IndexError()

|

||||

|

||||

|

||||

def _to_str2(

|

||||

size: int,

|

||||

suffixes: Iterable[str],

|

||||

base: int,

|

||||

*,

|

||||

precision: Optional[int] = 1,

|

||||

separator: Optional[str] = " ",

|

||||

) -> str:

|

||||

if size == 1:

|

||||

return "1 byte"

|

||||

elif size < base:

|

||||

return f"{size:,} bytes"

|

||||

|

||||

unit = base

|

||||

for suffix in suffixes:

|

||||

unit *= base

|

||||

if size < unit:

|

||||

return f"{size / (unit / base):,.{precision}f}{separator}{suffix}"

|

||||

|

||||

# Extra condition if size exceeds the largest unit

|

||||

return f"{size / (unit / base):,.{precision}f}{separator}{suffixes[-1]}"

|

||||

|

||||

|

||||

def find_common_tags(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

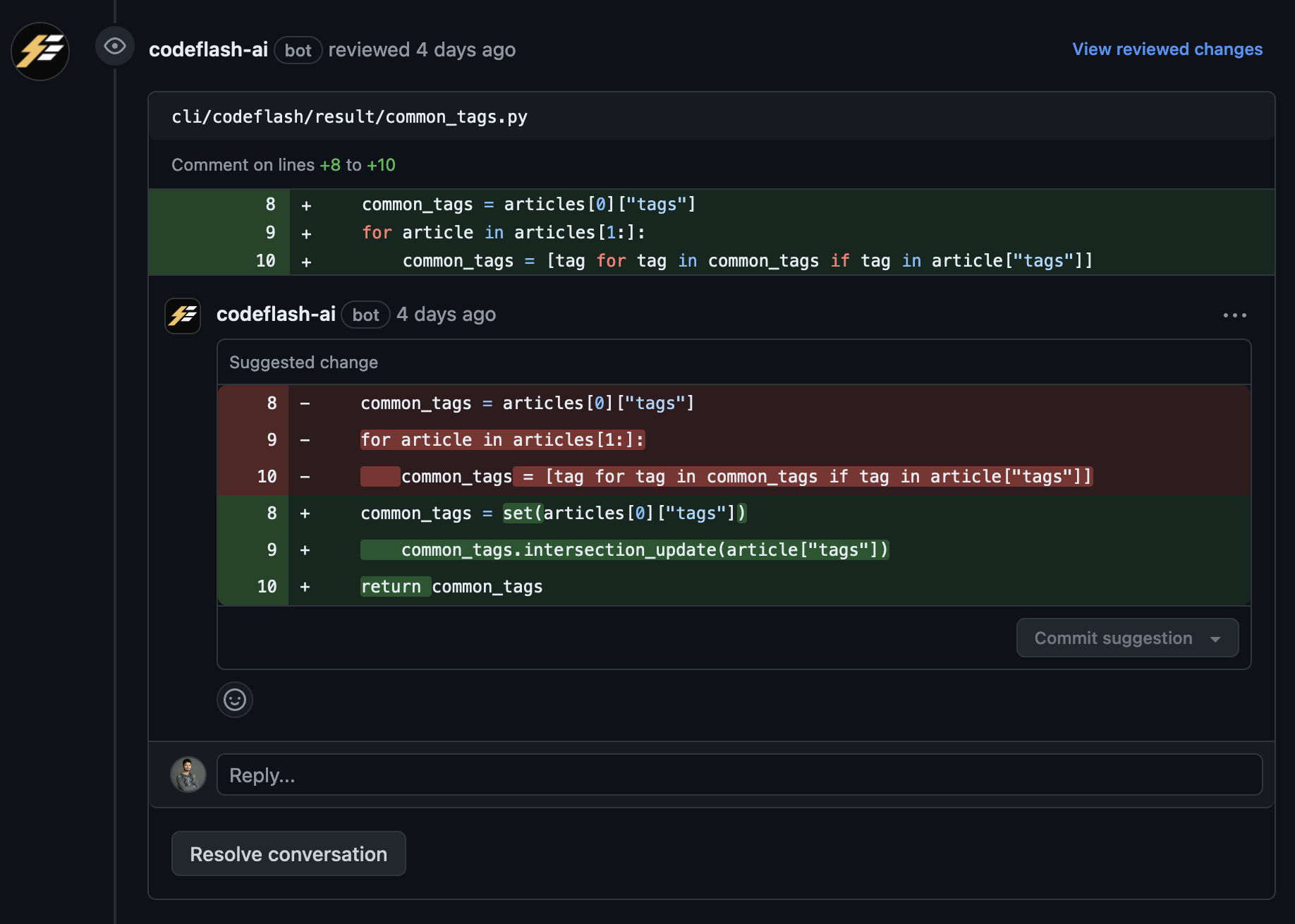

common_tags = articles[0]["tags"]

|

||||

for article in articles[1:]:

|

||||

common_tags = [tag for tag in common_tags if tag in article["tags"]]

|

||||

return set(common_tags)

|

||||

|

||||

|

||||

# crosshair diffbehavior --max_uninteresting_iterations 64 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags2

|

||||

# Given: (articles=[{'tags': ['', '']}, {'tags': ['', '']}, {'tags': []}, {}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : returns set()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags2 : raises KeyError()

|

||||

|

||||

|

||||

def find_common_tags2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article["tags"])

|

||||

return common_tags

|

||||

|

||||

|

||||

# Given: (articles=[{'\x00\x00\x00\x00': [], 'tags': ['']}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {'tags': ['']}, {}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : raises KeyError()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags2_1 : returns set()

|

||||

|

||||

|

||||

def find_common_tags2_1(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0].get("tags", []))

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article.get("tags", []))

|

||||

return common_tags

|

||||

|

||||

|

||||

# % crosshair diffbehavior --max_uninteresting_iterations 64 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags2_2

|

||||

# Given: (articles=[{'\x00\x00\x00\x00': [''], 'tags': ['']}, {'\x00\x00\x00\x00': [''], 'tags': ['']}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {'\x00\x00\x00\x00': [], '': []}, {'\x00\x00\x00\x00': [], 'tags': ['']}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : raises KeyError()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags2_2 : returns set()

|

||||

# (codeflash312) renaud@Renauds-Laptop codeflash %

|

||||

|

||||

|

||||

def find_common_tags2_2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

if not common_tags:

|

||||

break

|

||||

common_tags.intersection_update(article["tags"])

|

||||

return common_tags

|

||||

|

||||

|

||||

# % crosshair diffbehavior --max_uninteresting_iterations 128 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags2_3

|

||||

# Given: (articles=[{'tags': ['', '']}, {'tags': ['', '']}, {'tags': []}, {}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : returns set()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags2_3 : raises KeyError()

|

||||

# Given: (articles=[{'\x00\x00\x00\x00': [], 'tags': []}, {'\x00\x00\x00\x00': [], 'tags': []}, {'\x00\x00\x00\x00': [], 'tags': []}, {'\x00\x00\x00\x00': []}, {}, {}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : returns set()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags2_3 : raises KeyError()

|

||||

|

||||

|

||||

def find_common_tags2_3(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

article_tags = article["tags"] # Access 'tags' key to match KeyError behavior

|

||||

if not common_tags:

|

||||

continue # Skip intersection but maintain KeyError on missing 'tags'

|

||||

common_tags.intersection_update(article_tags)

|

||||

return common_tags

|

||||

|

||||

|

||||

def find_common_tags2_4(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

if common_tags:

|

||||

article_tags = article["tags"] # Access 'tags' only if common_tags is not empty

|

||||

common_tags.intersection_update(article_tags)

|

||||

else:

|

||||

# Do not access article["tags"]; no KeyError is raised

|

||||

pass

|

||||

return common_tags

|

||||

|

||||

|

||||

def find_common_tags2_5(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

# Initialize with the first article's tags, defaulting to an empty list if "tags" is missing

|

||||

common_tags = set(articles[0].get("tags", []))

|

||||

|

||||

for article in articles[1:]:

|

||||

# Use .get("tags", []) to safely access tags, defaulting to an empty list if missing

|

||||

common_tags.intersection_update(article.get("tags", []))

|

||||

|

||||

# Early exit if there are no common tags left

|

||||

if not common_tags:

|

||||

break

|

||||

|

||||

return common_tags

|

||||

|

||||

|

||||

def find_common_tags2_6(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

# Initialize with the first article's tags

|

||||

common_tags = set(articles[0]["tags"]) # Raises KeyError if "tags" is missing

|

||||

|

||||

for article in articles[1:]:

|

||||

# Directly access "tags", maintaining behavior

|

||||

common_tags.intersection_update(article["tags"])

|

||||

|

||||

# Early exit if no common tags remain

|

||||

if not common_tags:

|

||||

break

|

||||

|

||||

return common_tags

|

||||

|

||||

|

||||

def find_common_tags2_7(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

# Initialize with the first article's tags (raises KeyError if "tags" is missing)

|

||||

common_tags = set(articles[0]["tags"])

|

||||

|

||||

for article in articles[1:]:

|

||||

if not common_tags:

|

||||

# If no common tags remain, no need to process further

|

||||

break

|

||||

|

||||

# Access "tags" directly, maintaining original behavior (raises KeyError if missing)

|

||||

common_tags.intersection_update(article["tags"])

|

||||

|

||||

return common_tags

|

||||

|

||||

|

||||

def find_common_tags2_8(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

# Initialize with the first article's tags (raises KeyError if "tags" is missing)

|

||||

try:

|

||||

common_tags = set(articles[0]["tags"])

|

||||

except KeyError:

|

||||

raise KeyError("The first article is missing the 'tags' key.")

|

||||

|

||||

for index, article in enumerate(articles[1:], start=2):

|

||||

try:

|

||||

tags = article["tags"]

|

||||

except KeyError:

|

||||

raise KeyError(f"Article at position {index} is missing the 'tags' key.")

|

||||

|

||||

# Perform intersection with the current article's tags

|

||||

common_tags.intersection_update(tags)

|

||||

|

||||

return common_tags

|

||||

|

||||

|

||||

def find_common_tags2_9(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

# Initialize with the first article's tags (raises KeyError if "tags" is missing)

|

||||

common_tags = set(articles[0]["tags"])

|

||||

|

||||

for article in articles[1:]:

|

||||

if not common_tags:

|

||||

# If no common tags remain, no need to process further

|

||||

break

|

||||

# Directly access "tags", allowing KeyError to propagate naturally

|

||||

common_tags.intersection_update(article["tags"])

|

||||

|

||||

return common_tags

|

||||

|

||||

|

||||

# crosshair diffbehavior --max_uninteresting_iterations 64 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags3

|

||||

# Given: (articles=[{'tags': ['', '', '', '']}, {'tags': ['', '', '', '']}, {'tags': ['', '', '']}, {'tags': ['', '', '', '']}, {'tags': ['', '', '']}, {}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : raises KeyError()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags3 : returns set()

|

||||

# Given: (articles=[{'\x00\x00\x00\x00': ['', ''], 'tags': [], '': []}, {}, {'\x00\x00\x00\x00': ['', ''], '': []}, {'': []}, {'\x00\x00\x00\x00': ['', ''], 'tags': [], '': []}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : returns set()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags3 : raises KeyError()

|

||||

|

||||

|

||||

def find_common_tags3(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article["tags"])

|

||||

if not common_tags:

|

||||

break

|

||||

return common_tags

|

||||

|

||||

|

||||

# % crosshair diffbehavior --max_uninteresting_iterations 64 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags4

|

||||

# Given: (articles=[{'\x00\x00\x00\x00': ['', ''], 'tags': [], '': []}, {}, {'\x00\x00\x00\x00': ['', ''], '': []}, {'': []}, {'\x00\x00\x00\x00': ['', ''], 'tags': [], '': []}]),

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags : returns set()

|

||||

# code_to_optimize.bubble_sort_typed.find_common_tags4 : raises KeyError()

|

||||

|

||||

|

||||

def find_common_tags4(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

common_tags &= set(article["tags"])

|

||||

if not common_tags: # Early exit if no common tags.

|

||||

break

|

||||

return common_tags

|

||||

|

||||

|

||||

def with_pattern(pattern: str, regex_group_count: int | None = None) -> Callable:

|

||||

def decorator(func: Callable) -> Callable:

|

||||

func.pattern = pattern

|

||||

func.regex_group_count = regex_group_count

|

||||

return func

|

||||

|

||||

return decorator

|

||||

|

||||

|

||||

def with_pattern2(pattern: str, regex_group_count: int | None = None) -> Callable:

|

||||

return (

|

||||

lambda func: setattr(func, "pattern", pattern)

|

||||

or setattr(func, "regex_group_count", regex_group_count)

|

||||

or func

|

||||

)

|

||||

|

||||

|

||||

"""

|

||||

We have this original code:

|

||||

```

|

||||

def find_common_tags(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = articles[0]["tags"]

|

||||

for article in articles[1:]:

|

||||

common_tags = [tag for tag in common_tags if tag in article["tags"]]

|

||||

return set(common_tags)

|

||||

```

|

||||

|

||||

We generated optimized code:

|

||||

```

|

||||

def find_common_tags2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article["tags"])

|

||||

return common_tags

|

||||

```

|

||||

|

||||

When analyzed the optimized code for correctness with Concolic Testing (using CrossHair DiffBehavior), we found the following counter-example:

|

||||

```

|

||||

crosshair diffbehavior --max_uninteresting_iterations 64 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags2

|

||||

|

||||

Given: (articles=[{'tags': ['', '']}, {'tags': ['', '']}, {'tags': []}, {}]), code_to_optimize.bubble_sort_typed.find_common_tags : returns set() code_to_optimize.bubble_sort_typed.find_common_tags2 : raises KeyError()

|

||||

```

|

||||

|

||||

Fix find_common_tags2 for correctness relative to find_common_tags, making sure it is still optimized for speed.

|

||||

"""

|

||||

|

||||

"""

|

||||

To fix the `KeyError` in `find_common_tags2` and ensure it behaves like the original `find_common_tags`, we'll modify the code to handle cases where the `'tags'` key might be missing in some articles. In the original code, it seems that articles might not always have the `'tags'` key, and in such cases, the code should default to an empty list.

|

||||

|

||||

Here's the corrected and optimized version:

|

||||

|

||||

```python

|

||||

def find_common_tags2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0].get("tags", []))

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article.get("tags", []))

|

||||

return common_tags

|

||||

```

|

||||

|

||||

**Explanation:**

|

||||

|

||||

- **Use `get` with a default value:**

|

||||

- We replaced `article["tags"]` with `article.get("tags", [])`. This ensures that if the `'tags'` key is missing, it defaults to an empty list instead of raising a `KeyError`.

|

||||

- **Initialize `common_tags` properly:**

|

||||

- We initialize `common_tags` with `set(articles[0].get("tags", []))` to handle cases where the first article might not have `'tags'`.

|

||||

- **Maintain Performance Optimization:**

|

||||

- By using `set` operations, we keep the optimized performance of the function while ensuring correctness.

|

||||

|

||||

This change ensures that the function doesn't raise a `KeyError` when an article is missing the `'tags'` key, and it behaves identically to the original function for all inputs.

|

||||

"""

|

||||

|

||||

"""We have this original code:

|

||||

```

|

||||

def find_common_tags(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = articles[0]["tags"]

|

||||

for article in articles[1:]:

|

||||

common_tags = [tag for tag in common_tags if tag in article["tags"]]

|

||||

return set(common_tags)

|

||||

```

|

||||

|

||||

We generated optimized code:

|

||||

```

|

||||

def find_common_tags2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article["tags"])

|

||||

return common_tags

|

||||

```

|

||||

|

||||

When analyzed the optimized code for correctness with Concolic Testing (using CrossHair DiffBehavior), we found the following counter-example:

|

||||

```

|

||||

crosshair diffbehavior --max_uninteresting_iterations 64 code_to_optimize.bubble_sort_typed.find_common_tags code_to_optimize.bubble_sort_typed.find_common_tags2

|

||||

|

||||

Given: (articles=[{'tags': ['', '']}, {'tags': ['', '']}, {'tags': []}, {}]), code_to_optimize.bubble_sort_typed.find_common_tags : returns set() code_to_optimize.bubble_sort_typed.find_common_tags2 : raises KeyError()

|

||||

```

|

||||

|

||||

We attempted to fix this with the following candidate:

|

||||

```python

|

||||

def find_common_tags2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0].get("tags", []))

|

||||

for article in articles[1:]:

|

||||

common_tags.intersection_update(article.get("tags", []))

|

||||

return common_tags

|

||||

```

|

||||

When analyzing the optimized code for correctness with Concolic Testing (using CrossHair DiffBehavior), we found a different counter-example:

|

||||

```

|

||||

Given: (articles=[{'\x00\x00\x00\x00': [], 'tags': ['']}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {'tags': ['']}, {}, {'\x00\x00\x00\x00': [], 'tags': ['']}, {}]),

|

||||

|

||||

code_to_optimize.bubble_sort_typed.find_common_tags : raises KeyError()

|

||||

code_to_optimize.bubble_sort_typed.find_common_tags2_1 : returns set()

|

||||

```

|

||||

|

||||

Fix find_common_tags2_1 for correctness relative to find_common_tags, making sure it is still optimized for speed.

|

||||

"""

|

||||

"""To fix `find_common_tags2_1` and ensure it behaves identically to `find_common_tags`, we need to address the discrepancy in how the two functions handle the absence of the `'tags'` key and when `common_tags` becomes empty.

|

||||

|

||||

**Issue Analysis:**

|

||||

|

||||

1. **KeyError Discrepancy:**

|

||||

- The original function raises a `KeyError` when accessing `article["tags"]` if the `'tags'` key is missing.

|

||||

- Our optimized version used `article.get("tags", [])`, which avoids the `KeyError`, leading to different behavior.

|

||||

|

||||

2. **Empty `common_tags`:**

|

||||

- In the original function, if `common_tags` becomes empty, the list comprehension doesn't access `article["tags"]`, preventing a `KeyError`.

|

||||

- In the optimized version, `intersection_update` still accesses `article["tags"]` even if `common_tags` is empty, leading to unnecessary `KeyError`s.

|

||||

|

||||

**Solution:**

|

||||

|

||||

- **Mimic Original Exception Behavior:**

|

||||

- We should ensure that `find_common_tags2` raises a `KeyError` in the same situations as `find_common_tags`.

|

||||

- Avoid accessing `article["tags"]` when `common_tags` is empty, just like the original function.

|

||||

|

||||

- **Optimize by Early Exit:**

|

||||

- If `common_tags` becomes empty, further intersections won't change it.

|

||||

- We can break out of the loop early, improving performance.

|

||||

|

||||

**Corrected and Optimized Version:**

|

||||

|

||||

```python

|

||||

def find_common_tags2(articles: list[dict[str, list[str]]]) -> set[str]:

|

||||

if not articles:

|

||||

return set()

|

||||

|

||||

common_tags = set(articles[0]["tags"])

|

||||

for article in articles[1:]:

|

||||

if not common_tags:

|

||||

break # No need to continue if common_tags is empty

|

||||

common_tags.intersection_update(article["tags"])

|

||||

return common_tags

|

||||

```

|

||||

|

||||

**Explanation:**

|

||||

|

||||

- **Accessing `article["tags"]`:**

|

||||

- We use `article["tags"]` instead of `article.get("tags", [])` to ensure a `KeyError` is raised if `'tags'` is missing, matching the original function's behavior.

|

||||

|

||||

- **Early Exit on Empty `common_tags`:**

|

||||

- The `if not common_tags: break` statement checks if `common_tags` is empty.

|

||||

- If it is empty, we break out of the loop, avoiding unnecessary accesses to `article["tags"]` that could raise a `KeyError`.

|

||||

|

||||

- **Performance Optimization:**

|

||||

- By breaking early when `common_tags` is empty, we prevent further iterations and accesses.

|

||||

- Using `set` operations with `intersection_update` retains the performance benefits.

|

||||

|

||||

**Validation with Counter-Examples:**

|

||||

|

||||

- **First Counter-Example:**

|

||||

- With `articles=[{'tags': ['', '']}, {'tags': ['', '']}, {'tags': []}, {}]`, the original function returns `set()` without raising `KeyError`.

|

||||

- Our corrected `find_common_tags2` also returns `set()` without raising `KeyError` because it avoids accessing `article["tags"]` when `common_tags` is empty.

|

||||

|

||||

- **Second Counter-Example:**

|

||||

- With an input where an article lacks `'tags'` and `common_tags` is not empty, the original function raises `KeyError`.

|

||||

- Our corrected `find_common_tags2` also raises `KeyError` in this situation, matching the original function's behavior.

|

||||

|

||||

**Conclusion:**

|

||||

|

||||